What Is a Data Structure?

When we think of a "structure" we often think of architecture, but data often has structure too. There are many different types of data structures: arrays, graphs, queues, stacks, and so on. We use these structures to store and access data efficiently. A data structure that works very well in one situation may perform poorly in another. Knowing the advantages and disadvantages of the most common data structures will help you decide which one to use in a given situation.

What Is an Algorithm?

An algorithm is really just a fancy word for a set of specific instructions for the computer to follow. There are tons of different algorithms: ones that find the shortest path in a graph, ones that find the roots of a quadratic equation, and, more commonly associated with data structures, ones that sort data. A flow chart, for example, describes an algorithm (a set of instructions to solve a problem).

Describing Behavior

When we talk about data structures and algorithms, we want to quantify the time and space each one uses (its behavior). Computer scientists and mathematicians often do this with something called Big-Oh notation.

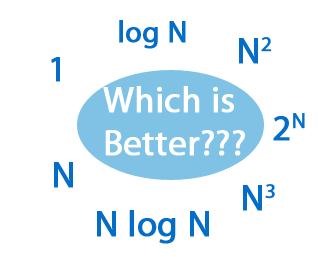

The most common behaviors are shown below. This blog post summarizes each fairly well and is worth checking out: Running Time Complexity Functions Summarized.

Each of these describes roughly how the running time of an algorithm grows as the problem gets bigger. We write each one in big-oh notation like so: O(N), pronounced "order N". You can also call it "linear". Likewise, O(log N) is logarithmic, O(N^2) is quadratic, and so on. You will understand how we determine this later, after you have seen a concrete example, so don't worry too much. N can mean whatever you define it as, but it is usually the problem size or the number of things you are working with. For example, if you have 5,000 words that you want to sort alphabetically, then N = 5,000.
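To make the difference between linear and quadratic growth a little more tangible, here is a minimal Java sketch that just counts loop iterations. The class and method names (`GrowthDemo`, `linearSteps`, `quadraticSteps`) are made up for illustration and do not come from any library.

```java
// Hypothetical helper class, for illustration only.
class GrowthDemo {
    // One pass over the data: the loop body runs N times, so this is O(N).
    static long linearSteps(int[] data) {
        long steps = 0;
        for (int i = 0; i < data.length; i++) {
            steps++;
        }
        return steps;
    }

    // Every element is paired with every element: N * N steps, so this is O(N^2).
    static long quadraticSteps(int[] data) {
        long steps = 0;
        for (int i = 0; i < data.length; i++) {
            for (int j = 0; j < data.length; j++) {
                steps++;
            }
        }
        return steps;
    }
}
```

With N = 5,000, the first method counts 5,000 steps while the second counts 25,000,000, which is why the growth rate matters far more than the exact cost of each individual step.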

Common Data Structures

Before we jump into anything more complicated, let's first get acquainted with some of the most common data structures. I am most familiar with Java, so I may draw on knowledge specific to that programming language, but most of these data structures exist in other object-oriented programming languages such as C++, Visual Basic, and many others.

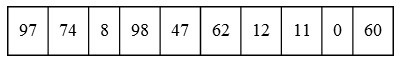

Arrays: An array is a series of values associated with a numerical index. Array values are usually stored contiguously (right next to one another) and start with index 0. Let's look at an example array:

We would have: array[0] = 97, array[1] = 74, ..., array[9] = 60. Each value has an associated index, and the memory address of a value can be calculated directly from its index. This makes it really easy to access the value at any arbitrary index: retrieving any value takes constant O(1) time. Note that arrays usually cannot grow dynamically in size, so if, for example, you wanted to add another element to the above array, you would need to declare a new, larger array and copy all the elements over to it, which takes linear O(N) time. This doesn't mean much unless we have something else to compare it to, so let's look at another data structure.
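The copy-to-grow step can be sketched in Java roughly as follows. This is only an illustration: `ArrayGrowth` and `append` are hypothetical names, and the loop is written out by hand to make the O(N) copy visible.

```java
// Hypothetical helper class, for illustration only.
class ArrayGrowth {
    // Returns a new array one slot longer than the original, with 'value' at the end.
    // Copying every existing element is what makes this O(N).
    static int[] append(int[] original, int value) {
        int[] bigger = new int[original.length + 1];
        for (int i = 0; i < original.length; i++) {
            bigger[i] = original[i];
        }
        bigger[original.length] = value;
        return bigger;
    }
}
```

Reading a value such as `array[0]` needs no loop at all, which is the O(1) access described above, while `ArrayGrowth.append(array, 42)` has to touch every existing element before it can store the new one.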

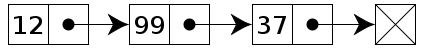

Linked List: The formal definition is "a data structure consisting of a group of nodes which together represent a sequence." Like array values, the nodes of a linked list have positions (indexes), but we reach them by following links with an iterator rather than by computing an address. In the linked list below, the head is "12", which is where the iterator always begins. Suppose we have a linked list object called "list"; then list.head = 12 and list.head.next = 99. The last node is called the tail, and its next link is always "null" (nothing there).

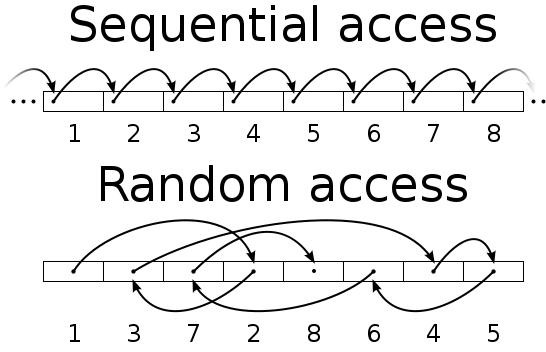

Linked lists have sequential instead of random access because we must always start at the head (index 0) and work our way forward until we get to the desired index, which takes O(index) time. This is one major disadvantage of linked lists. Additionally, linked lists require more memory because the references/links take up space.
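A minimal singly linked list in Java might look like the sketch below. It is not how `java.util.LinkedList` is written. `SimpleLinkedList`, `Node`, `addFirst`, and `get` are names assumed for this example, and the head's value is read as `list.head.value` rather than `list.head`.

```java
// Hypothetical minimal linked list, for illustration only.
class SimpleLinkedList {
    // Each node stores a value and a link to the next node (null at the end).
    static class Node {
        int value;
        Node next;

        Node(int value) {
            this.value = value;
        }
    }

    Node head;

    // Adds a value at the front in O(1) by redirecting the head link.
    void addFirst(int value) {
        Node node = new Node(value);
        node.next = head;
        head = node;
    }

    // Sequential access: walk forward from the head, one link per step, O(index).
    int get(int index) {
        if (index < 0) {
            throw new IndexOutOfBoundsException("index " + index);
        }
        Node current = head;
        for (int i = 0; i < index && current != null; i++) {
            current = current.next;
        }
        if (current == null) {
            throw new IndexOutOfBoundsException("index " + index);
        }
        return current.value;
    }
}
```

Calling `get(0)` returns immediately, but `get(index)` for a large index has to follow that many links first, which is the sequential-access cost described above.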

On the other hand, it is really easy to add an element at any spot once the iterator is there. To do this in an array, we often have to shift or copy some or all of the elements, which can be costly. In a linked list, you simply redirect the links to the new element. So if you have a situation in which elements are frequently added and removed at arbitrary positions, a linked list is probably a good choice.
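Redirecting the links can be sketched in Java like this. The names `LinkInsertion`, `insertAfter`, and `removeAfter` are hypothetical, and the sketch assumes we already hold a reference to the node just before the spot we care about.

```java
// Hypothetical helper class, for illustration only.
class LinkInsertion {
    static class Node {
        int value;
        Node next;

        Node(int value, Node next) {
            this.value = value;
            this.next = next;
        }
    }

    // Inserts a new node right after 'previous' in O(1): only two links change,
    // and no other element is shifted or copied.
    static Node insertAfter(Node previous, int value) {
        Node added = new Node(value, previous.next);
        previous.next = added;
        return added;
    }

    // Removes the node right after 'previous' in O(1) by skipping over it.
    static void removeAfter(Node previous) {
        if (previous.next != null) {
            previous.next = previous.next.next;
        }
    }
}
```

Neither method contains a loop, so the cost does not depend on the length of the list. Getting to `previous` in the first place still takes a walk from the head.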

To make access at the end of a linked list as efficient as at the front, we create what is called a doubly linked list. Each node gets a second link pointing back to the previous node, so we can also walk from the tail toward the head. Whichever half of the list the desired index is in determines whether we start at the head or the tail. This requires more space, but usually the performance boost is worth it.
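Here is a rough Java sketch of that idea, again with made-up names (`SimpleDoublyLinkedList`, `addLast`, `get`). It assumes the list keeps track of its size so it can tell which half an index falls in.

```java
// Hypothetical minimal doubly linked list, for illustration only.
class SimpleDoublyLinkedList {
    static class Node {
        int value;
        Node prev; // link back toward the head
        Node next; // link forward toward the tail

        Node(int value) {
            this.value = value;
        }
    }

    Node head;
    Node tail;
    int size;

    // Appends at the end in O(1) because we keep a reference to the tail.
    void addLast(int value) {
        Node node = new Node(value);
        if (tail == null) {
            head = node;
        } else {
            tail.next = node;
            node.prev = tail;
        }
        tail = node;
        size++;
    }

    // Starts from whichever end is closer, so at most about size / 2 links are followed.
    int get(int index) {
        if (index < 0 || index >= size) {
            throw new IndexOutOfBoundsException("index " + index);
        }
        Node current;
        if (index < size / 2) {
            current = head;
            for (int i = 0; i < index; i++) {
                current = current.next;
            }
        } else {
            current = tail;
            for (int i = size - 1; i > index; i--) {
                current = current.prev;
            }
        }
        return current.value;
    }
}
```

The extra `prev` reference in every node is the additional space mentioned above. Java's own `java.util.LinkedList` is also a doubly linked list.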

There are half a dozen other common data structures that would be useful to know, but I don't want to make this article obscenely long, so we will stop here and continue in a later article. I will also probably write up some articles focusing on algorithms since we mostly covered data structures here.

If you have any questions just comment below, post in the forum or send me a message!
